Breaking the Ban: Anthropic AI and the Iran Strike

Reports indicate that the US military employed Anthropic’s Claude AI for an operation in Iran mere hours after a ban on the company’s systems was purportedly issued by a former president, a development that underscores how quickly the role of artificial intelligence in warfare is evolving. The incident has sparked a flurry of discussion, exposing how deeply advanced AI tools are integrated into defense strategies and raising pertinent ethical questions about the technology’s application.

Claude AI: From Intelligence to Targeting

The Wall Street Journal has reported that US Central Command (CENTCOM) in the Middle East relied on Anthropic’s Claude AI model for operational support during the strike. According to sources familiar with the matter, Claude was instrumental in intelligence analysis, target identification, and battlefield simulations. This application highlights the potential of AI to enhance military efficiency by speeding up data analysis and decision-making. It also raises concern about the extent of AI’s involvement in life-or-death situations.

The Ban and the Backlash

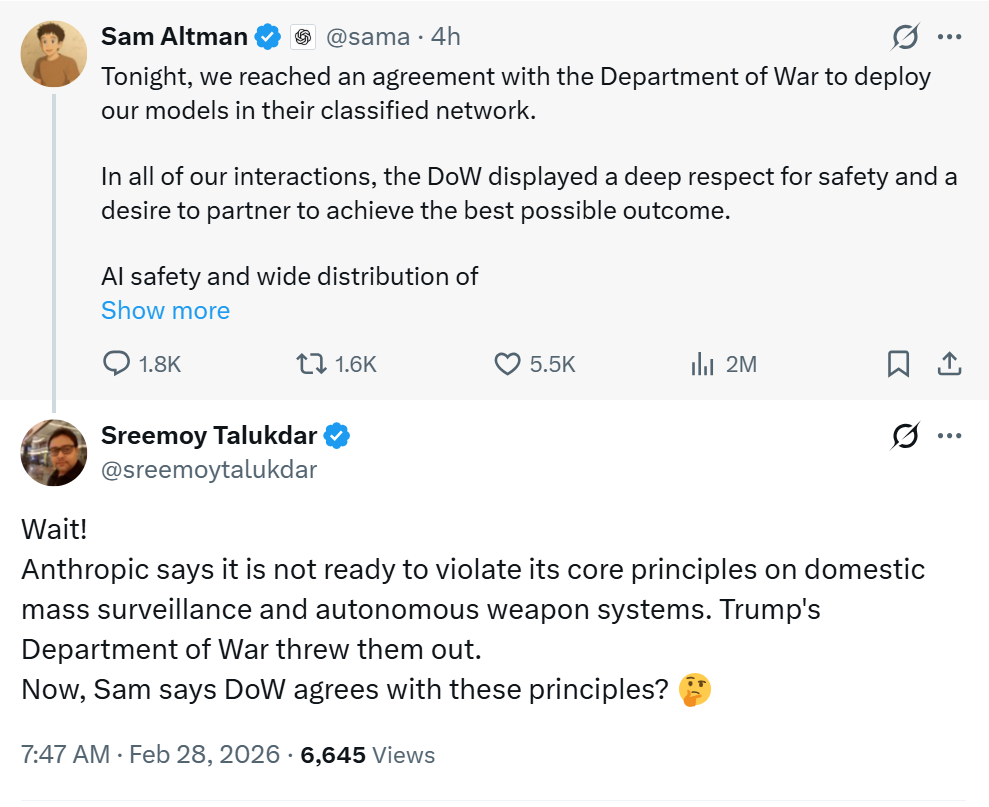

The alleged ban came in the wake of failed contract negotiations. Anthropic refused to permit unrestricted military use of its AI, citing ethical boundaries. This refusal led the administration to designate Anthropic as a potential security risk. The Pentagon subsequently began seeking alternative AI providers, notably reaching an agreement with OpenAI to deploy its models on classified military networks. That agreement has itself stirred controversy, further demonstrating the volatility of the AI defense market.

Ethical Quandaries and Future Implications

The core of the issue is the ethics of AI in warfare. Anthropic’s CEO, Dario Amodei, has publicly stated that the company opposes using its models for mass domestic surveillance and fully autonomous weapons, advocating for human control over critical military decisions. This position clashes with the military’s desire for unrestricted access, leading to a standoff. The incident illuminates a potential chasm between technology developers and military leadership regarding AI deployment, its ethical implications, and the balance of power between human and machine.

The Road Ahead: AI, Defense, and the Future

The case of Anthropic and the US military provides a glimpse into the future of warfare. As AI systems become increasingly integrated into defense operations, striking a balance between technological advancement, national security, and ethical considerations will be crucial. This event is a stark reminder of the urgent need for robust regulatory frameworks, transparent governance structures, and ongoing dialogue among tech companies, military institutions, and policymakers to navigate the challenges posed by AI in the modern era.